On May 28 at the COMPUTEX conference in Taipei, NVIDIA announced a host of new hardware and networking tools, many focused around enabling artificial intelligence. The new lineup includes the 1-exaflop supercomputer, the DGX GH200 class; over 100 system configuration options designed to help companies host AI and high-performance computing needs; a modular reference architecture for accelerated servers; and a cloud networking platform built around Ethernet-based AI clouds.

The announcements — and the first public talk co-founder and CEO Jensen Huang has given since the start of the COVID-19 pandemic — helped propel NVIDIA in sight of the coveted $1 trillion market capitalization. Doing so would make it the first chipmaker to ascend to within the realm of tech giants like Microsoft and Apple.

Jump to:

- What makes the DGX GH200 for AI supercomputers different

- Alternatives to NVIDIA’s supercomputing chips

- Enterprise library supports AI deployments

- Faster networking for AI in the cloud

- MGX Server Specification coming soon

- What will change about data center management?

- Other news from NVIDIA at COMPUTEX

What makes the DGX GH200 for AI supercomputers different?

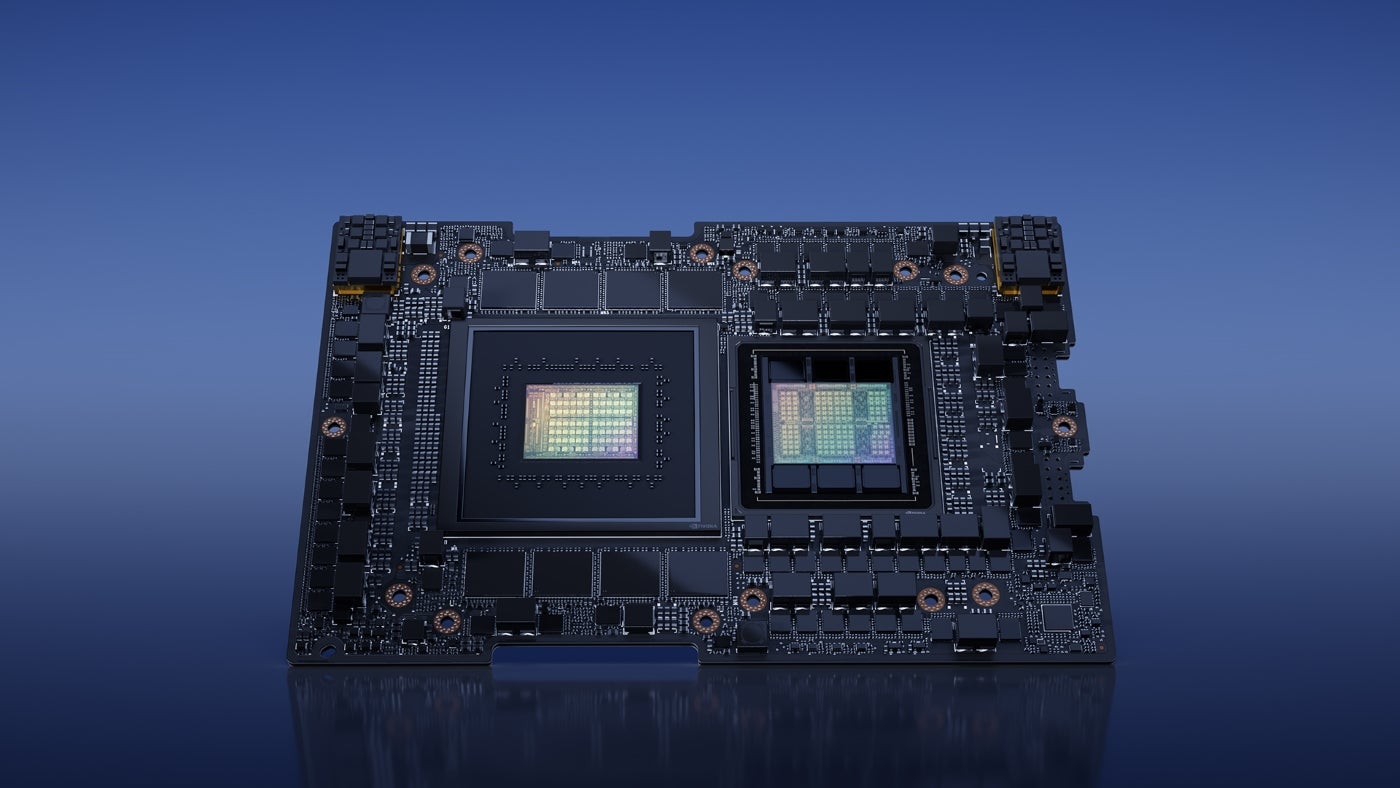

NVIDIA’s new class of AI supercomputers take advantage of the GH200 Grace Hopper Superchips, and the NVIDIA NVLink Switch System interconnect to run generative AI language applications, recommender systems (machine learning engines for predicting what a user might rate a product or piece of content), and data analytics workloads (Figure A). It’s the first product to use both the high-performance chips and the novel interconnect.

Figure A

NVIDIA will offer the DGX GH200 to Google Cloud, Meta and Microsoft first. Next, it plans to offer the DGX GH200 design as a blueprint to cloud service providers and other hyperscalers. It is expected to be available by the end of 2023.

The DGX GH200 is intended to let organizations run AI from their own data centers. 256 GH200 superchips in each unit provide 1 exaflop of performance and 144 terabytes of shared memory.

NVIDIA explained in the announcement that the NVLink Switch System enables the GH200 chips to bypass a conventional CPU-to-GPU PCIe connection, increasing the bandwidth while reducing power consumption.

Mark Lohmeyer, vice president of compute at Google Cloud, pointed out in an NVIDIA press release that the new Hopper chips and NVLink Switch System can “address key bottlenecks in large-scale AI.”

“Training large AI models is traditionally a resource- and time-intensive task,” said Girish Bablani, corporate vice president of Azure infrastructure at Microsoft, in the NVIDIA press release. “The potential for DGX GH200 to work with terabyte-sized datasets would allow developers to conduct advanced research at a larger scale and accelerated speeds.”

NVIDIA will also keep some supercomputing capability for itself; the company plans to work on its own supercomputer called Helios, powered by four DGX GH200 systems.

Alternatives to NVIDIA’s supercomputing chips

There aren’t many companies or customers aiming for the AI and supercomputing speeds NVIDIA’s Grace Hopper chips enable. NVIDIA’s major rival is AMD, which produces the Instinct MI300. This chip includes both CPU and GPU cores, and is expected to run the 2 exaflop El Capitan supercomputer.

Intel offered the Falcon Shores chip, but it recently announced that this would not be coming out with both a CPU and GPU. Instead, it has changed the roadmap to focus on AI and high-powered computing, but not include CPU cores.

Enterprise library supports AI deployments

Another new service, the NVIDIA AI Enterprise library, is designed to help organizations access the software layer of the new AI offerings. It includes more than 100 frameworks, pretrained models and development tools. These frameworks are appropriate for the development and deployment of production AI including generative AI, computer vision, speech AI and others.

On-demand support from NVIDIA AI experts will be available to help with deploying and scaling AI projects. It can help deploy AI on data center platforms from VMware and Red Hat or on NVIDIA-Certified Systems.

SEE: Are ChatGPT or Google Bard right for your business?

Faster networking for AI in the cloud

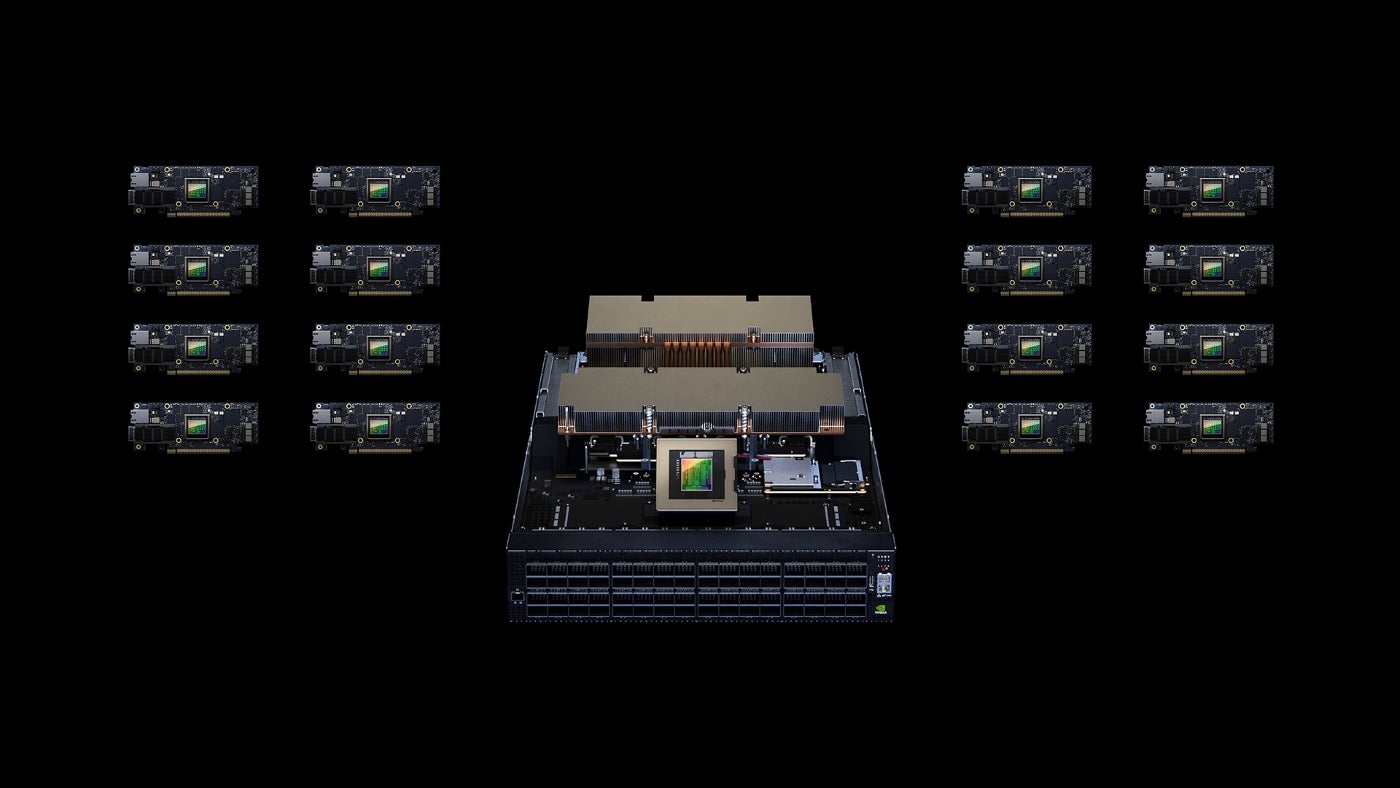

NVIDIA wants to help speed up Ethernet-based AI clouds with the accelerated networking platform Spectrum-X (Figure B).

Figure B

“NVIDIA Spectrum-X is a new class of Ethernet networking that removes barriers for next-generation AI workloads that have the potential to transform entire industries,” said Gilad Shainer, senior vice president of networking at NVIDIA, in a press release.

Spectrum-X can support AI clouds with 256 200Gbps ports connected by a single switch or 16,000 ports in a two-tier spine-leaf topology.

Spectrum-X does so by utilizing Spectrum-4, a 51Tbps Ethernet switch built specifically for AI networks. Advanced RoCE extensions bringing together the Spectrum-4 switches, BlueField-3 DPUs and NVIDIA LinkX optics create an end-to-end 400GbE network optimized for AI clouds, NVIDIA said.

Spectrum-X and its related products (Spectrum-4 switches, BlueField-3 DPUs and 400G LinkX optics) are available now, including ecosystem integration with Dell Technologies, Lenovo and Supermicro.

MGX Server Specification coming soon

In more news regarding accelerated performance in data centers, NVIDIA has released the MGX server specification. It is a modular reference architecture for system manufacturers working on AI and high-performance computing.

“We created MGX to help organizations bootstrap enterprise AI,” said Kaustubh Sanghani, vice president of GPU products at NVIDIA, in a press release.

Manufacturers will be able to specify their GPU, DPU and CPU preferences within the initial, basic system architecture. MGX is compatible with current and future NVIDIA server form factors, including 1U, 2U, and 4U (air or liquid cooled).

SoftBank is now working on building a network of data centers in Japan which will use the GH200 Superchips and MGX systems for5G services and generative AI applications.

QCT and Supermicro have adopted MGX and will have it on the market in August.

What will change about data center management?

For businesses, adding high-performance computing or AI to data centers will require changes to physical infrastructure designs and systems. Whether and how much to do so depends on the individual situation. Joe Reele, vice president, solution architects at Schneider Electric, said many larger organizations are already on their way to making their data centers ready for AI and machine learning.

“Power density and heat dissipation are the drivers behind this transition,” Reele said in an email to TechRepublic. “Additionally, the way the IT kit is architected for AI/ML in the white space is a driver as well when considering the need for things such as shorter cable runs and clustering.”

Operators of enterprise-owned data centers should decide based on their business priorities whether replacing servers and upgrading IT equipment to support generative AI workloads makes sense for them, Reele said.

“Yes, new servers will be more efficient and pack more punch when it comes to compute power, but operators must consider elements such as compute utilization, carbon emissions, and of course space, power, and cooling. While some operators may need to adjust their server infrastructure strategies, many will not need to make these massive updates in the near term,” he said.

Other news from NVIDIA at COMPUTEX

NVIDIA announced a variety of other new products and services based around artificial intelligence:

- WPP and NVIDIA Omniverse came together to announce a new engine for marketing. The content engine will be able to generate video and images for advertising.

- A smart manufacturing platform, Metropolis for Factories, can create and manage custom quality-control systems.

- The Avatar Cloud Engine (ACE) for Games is a foundry service for video game developers. It enables animated characters to call on AI for speech generation and animation.